Building Healthcare Connectors That Survive Real-World Data

Healthcare integrations don't fail because the standards are missing. They fail because real-world data never behaves the way the standard says it should.

Here's the actual problem: your integration team spends three months wiring up an HL7 feed, gets it working in staging, and ships it to production. Then it silently corrupts data the first time the health system updates their Epic configuration, sends Z-segments that don't match the spec, or starts populating a field retroactively three days after the original event.

We have built and operated the xCaliber Healthcare Data Gateway (HDG) across EHR variants, labs, imaging systems, and revenue-cycle platforms, serving customers from seed-stage clinical AI companies to Series C platforms processing millions of records per day. One thing is clear: most connectors work in demos. Very few survive production reality.

The Real Problem: Why Healthcare Connectivity Is Broken

Before we get into connector architecture, it's worth naming the structural failures that most integration projects inherit before writing a single line of code.

- Point-to-point hell: Integrating with EHR variants the traditional way means writing custom code for every new deployment. Each one is a net-new project. That's what drives 3-month integration timelines for work that should take two weeks.

- Protocol chaos: Healthcare data doesn't arrive in one format. It arrives in six protocol families simultaneously: HL7 v2/v3, FHIR R4, CCDA, X12, proprietary JSON, and unstructured documents. A connector strategy that only handles one is already incomplete.

- Dark data: Roughly 60% of EHR data is API-inaccessible. It lives in browser UIs, scanned documents, and PDF attachments that standard API integrations never touch. Clinical AI systems that rely only on structured feeds are working with less than half the picture.

- One-way flows: Legacy integration engines are fundamentally read-only. AI outputs can't be written back to the EHR. The care loop never closes. The clinical workflow never changes.

These aren't edge cases or niche concerns. They're the baseline state of healthcare integration for the overwhelming majority of health AI companies we talk to.

First Principles: What a Connector Actually Is

Before we talk about production failures, we need to align on the definition. A connector in the xCaliber HDG is not simply a protocol. It is a combination of three distinct elements:

- Source Type - what we're connecting to: Epic, Cerner, Athena, a payer network, or a browser-based UI

- Data Format - the shape of the data: HL7 v2 messages, FHIR R4 JSON, CCDA documents, unstructured PDFs

- Protocol - how the data moves: REST, TCP/MLLP listeners, Kafka, WebSocket, or direct web UI interaction

This distinction matters more than it sounds. A single EHR source often requires multiple connectors simultaneously: for example, an HL7 feed for real-time ADT events, a FHIR API for clinical records, and an Operator extraction for data that exists only in the browser UI. Managing those as a single integration is architectural fiction. Managing them as distinct connectors, with explicit synchronization logic between them, is how you build something maintainable.
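The three-part definition can be sketched as a small data model. This is a minimal illustration, not the HDG's actual schema: the `ConnectorSpec`, `DataFormat`, and `Protocol` names and the `connectors_for` helper are hypothetical, and treating Operator input as "unstructured" is an assumption made only for the example.

```python
from dataclasses import dataclass
from enum import Enum


class DataFormat(Enum):
    HL7_V2 = "hl7v2"
    FHIR_R4 = "fhir-r4"
    CCDA = "ccda"
    UNSTRUCTURED = "unstructured"


class Protocol(Enum):
    MLLP = "tcp/mllp"
    REST = "rest"
    KAFKA = "kafka"
    WEBSOCKET = "websocket"
    WEB_UI = "web-ui"


@dataclass(frozen=True)
class ConnectorSpec:
    """Hypothetical model: one connector = one (source, format, protocol) combination."""
    source: str              # what we connect to, e.g. "epic"
    data_format: DataFormat  # the shape of the data
    protocol: Protocol       # how the data moves
    purpose: str


# One EHR source modelled as three distinct connectors rather than one integration.
epic_connectors = [
    ConnectorSpec("epic", DataFormat.HL7_V2, Protocol.MLLP, "real-time ADT events"),
    ConnectorSpec("epic", DataFormat.FHIR_R4, Protocol.REST, "clinical records"),
    # Assumption: UI-only content is modelled as unstructured input.
    ConnectorSpec("epic", DataFormat.UNSTRUCTURED, Protocol.WEB_UI, "UI-only data (Operator)"),
]


def connectors_for(source: str, specs: list[ConnectorSpec]) -> list[ConnectorSpec]:
    """Return every connector feeding one source; sync logic operates over this set."""
    return [s for s in specs if s.source == source]
```

Keeping each connector as its own value makes the synchronization problem explicit: whatever reconciles the three feeds has to iterate over `connectors_for("epic", ...)` instead of pretending there is one pipe.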

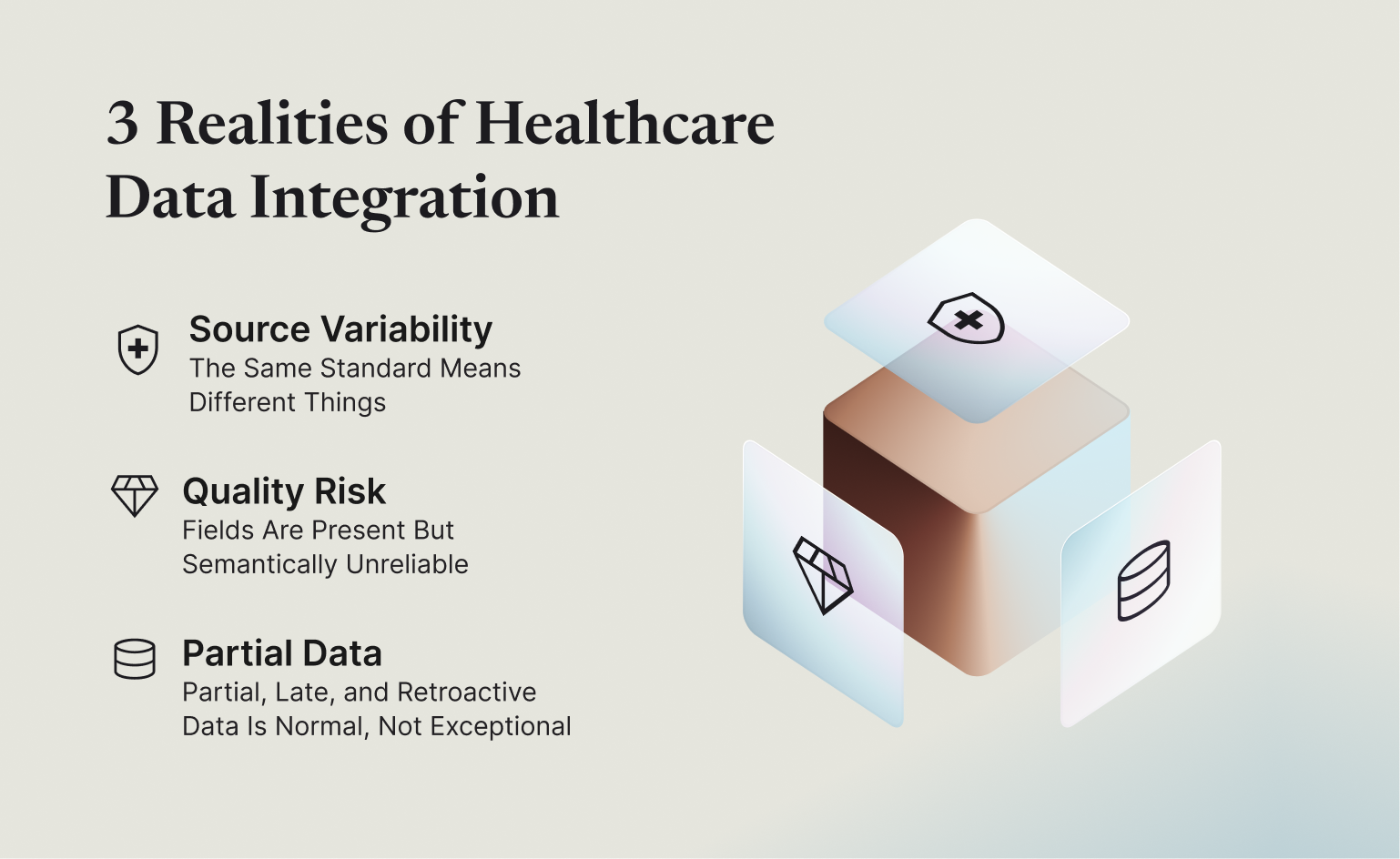

The Three Realities of Production Healthcare Data

After running connectors across dozens of real healthcare deployments, we have identified three failure modes that catch teams off guard, not once, but repeatedly.

Reality #1: The Same Standard Means Different Things

One of our customers, a health AI automation platform processing over 300,000 patient encounters per day from multiple large health systems, discovered this the hard way. Each system was sending the same type of HL7 ADT^A01 (admissions) message, but the way each system actually used it was completely different:

- One health system populated PV1.2 (patient class) consistently on every event.

- A second system only populated PV1.2 on the first ADT event for an encounter. Subsequent events arrived with the field absent.

- A third system sent PV1.2 retroactively, sometimes correcting it days after the original admission event had already been processed.

- Each source used Z-segments to redefine meaning entirely, sometimes overriding core fields with locally significant values.

The downstream impact was real. Coding automation that depended on patient class fired incorrectly, producing billing codes that did not match the actual encounter type. The root cause was not a data bug. It was a connector architecture problem: the connector assumed every ADT^A01 looked the same regardless of which system sent it.

The lesson: A connector cannot treat 'ADT' as a single shape. It must treat source + version + configuration as the real contract, explicit, versioned, and testable. Anything less is a connector that works until something changes.
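One way to make "source + version + configuration" an explicit, testable contract is to key every interpretation profile on all three and refuse to process anything that is not registered. The sketch below is hypothetical: `ContractKey`, `AdtProfile`, `ContractRegistry`, and the `pv1_2_behavior` values are illustrative names modelled on the three PV1.2 behaviors described above, not a real API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContractKey:
    source: str    # e.g. "health-system-b" (hypothetical)
    version: str   # EHR / interface version
    config: str    # customer-specific configuration identifier


@dataclass(frozen=True)
class AdtProfile:
    # How this source populates PV1.2 (patient class):
    #   "every_event"      - present on every ADT event
    #   "first_event_only" - sent once; absence afterwards means "unchanged"
    #   "retroactive"      - may be corrected days later; later corrections win
    pv1_2_behavior: str
    zsegment_overrides: frozenset = frozenset()  # core fields a Z-segment may redefine


class UnknownContractError(KeyError):
    pass


class ContractRegistry:
    def __init__(self) -> None:
        self._profiles: dict[ContractKey, AdtProfile] = {}

    def register(self, key: ContractKey, profile: AdtProfile) -> None:
        self._profiles[key] = profile

    def profile_for(self, key: ContractKey) -> AdtProfile:
        # Fail loudly: an unrecognized source/version/config is a new contract
        # that must be profiled and tested, not silently parsed as "generic ADT".
        try:
            return self._profiles[key]
        except KeyError:
            raise UnknownContractError(key) from None
```

Because an EHR upgrade changes the `version` part of the key, it surfaces as an `UnknownContractError` to be profiled rather than as silently misread data.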

Reality #2: Fields Are Present But Semantically Unreliable

A digital health platform we work with, serving small and medium primary care practices across four different EHRs simultaneously, encountered a category of failure that is common but rarely discussed: fields that are present in the data but operationally dangerous to act on.

Specific examples from their production feeds:

- Identifier fields repurposed: One EHR populated the same field with both medical record numbers and insurance member IDs depending on the visit type, with no accompanying flag to distinguish which one had arrived.

- Timestamps defaulted to epoch: When appointment slots were created in bulk by an admin workflow, the system populated scheduling timestamps with January 1, 1970 rather than the actual booking date.

- Codes without value sets: Lab result codes were sent as plain-text strings with no binding to LOINC, the industry-standard terminology for laboratory and clinical observations. Without that binding, the codes were structurally present but semantically unusable for downstream analytics or care-gap logic.

- Enums as free-text strings: Medication status fields arrived as free-text strings: "active," "Active," "ACTIVE," and "still taking," all meaning the same thing, but all requiring normalization before any downstream comparison could work.
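Two of these failures, free-text enums and epoch-defaulted timestamps, can be caught with small normalization rules. The sketch below assumes a hand-maintained synonym table (hypothetical; in practice it would be curated per source) whose canonical codes loosely follow FHIR `MedicationStatement.status` values, and it assumes timestamps arrive as `YYYYMMDDHHMMSS` in UTC.

```python
from datetime import datetime, timezone
from typing import Optional

# Hypothetical per-source synonym table mapping free text to canonical codes.
MEDICATION_STATUS_SYNONYMS = {
    "active": "active",
    "still taking": "active",
    "stopped": "stopped",
    "discontinued": "stopped",
    "completed": "completed",
}

EPOCH_DATE = datetime(1970, 1, 1, tzinfo=timezone.utc).date()


def normalize_medication_status(raw: str) -> Optional[str]:
    """Map free-text status to a canonical code; None means 'unrecognized', not 'inactive'."""
    return MEDICATION_STATUS_SYNONYMS.get(raw.strip().lower())


def parse_scheduling_timestamp(raw: str) -> Optional[datetime]:
    """Parse a YYYYMMDDHHMMSS timestamp (assumed UTC), rejecting the 1970-01-01 default."""
    ts = datetime.strptime(raw, "%Y%m%d%H%M%S").replace(tzinfo=timezone.utc)
    # A slot created in bulk and stamped at the Unix epoch carries no real booking time.
    if ts.date() == EPOCH_DATE:
        return None
    return ts
```

Returning `None` rather than guessing keeps "we could not interpret this" distinct from a real value, which the next section builds on.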

The failure pattern in naive connectors is to treat field presence as usability: if the field exists, the assumption is that it can be used. In healthcare, that assumption is frequently wrong. A production connector must track three distinct states for every field: whether it is structurally present in the message, whether it carries a meaning that can actually be acted on, and whether it is safe to use downstream without risk of corrupting a record.

The lesson: Collapsing those three states into one is where silent data corruption begins. Your connector must distinguish between a field that is present, a field that is meaningful, and a field that is safe to act on.
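A minimal sketch of tracking the three states separately, using the repurposed-identifier example from above. `FieldAssessment` and `assess_as_mrn` are hypothetical names; the rule assumes the identifier is only trustworthy when it carries an HL7 identifier type code (`MR` for medical record number, `MB` for member number).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FieldAssessment:
    present: bool     # structurally present in the message
    meaningful: bool  # its meaning can be determined for this source/event
    safe: bool        # safe to act on for the intended downstream use
    reason: str


def assess_as_mrn(value: Optional[str], id_type: Optional[str]) -> FieldAssessment:
    """Assess whether an identifier field can be used as a medical record number.

    Hypothetical rule: one source reuses the same field for MRNs and insurance
    member IDs, so without a type code the value is present but not meaningful.
    """
    if not value:
        return FieldAssessment(False, False, False, "absent")
    if id_type not in {"MR", "MB"}:
        return FieldAssessment(True, False, False, "identifier type not stated")
    # Meaningful either way, but only safe to use as an MRN if it actually is one.
    return FieldAssessment(True, True, id_type == "MR", f"identifier type {id_type}")
```

A downstream patient-matching step would check `.safe`, not `.present`, before linking records.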

Reality #3: Partial, Late, and Retroactive Data Is Normal, Not Exceptional

A clinical AI company we support, automating provider inbox workflows across multiple EHR environments, ran into this pattern early in their deployment. Their AI needed a complete picture of a clinical encounter to take action. What the EHR actually delivered looked like this:

- Encounter created: patient demographic and appointment data arrives.

- Clinical note added: four hours later, after the provider completes documentation.

- Lab results arrive: the following morning, after the external lab returns results.

- Insurance class corrected: two days later, when billing identifies a coverage mismatch.

- Discharge summary finalized: three days after the encounter for complex cases.

A connector built to treat data as forward-only, where the first message is assumed to be the final truth, will corrupt the record. Timelines become wrong, patient state becomes stale, and downstream AI models fire on incomplete data.

This is not an edge case. For healthcare organizations managing payer contracting, prior authorization workflows, or multi-system environments, late and retroactive data is the default state, not the exception.

The lesson: Every connector must assume that the first message is rarely the last word. The ability to handle updates, corrections, and out-of-order arrivals is not an optional feature. It is a baseline requirement for correctness.
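Handling late and out-of-order data mostly comes down to deciding which update is current by the time the source says it happened, not by arrival order, while keeping every update. The sketch below assumes each update carries a reliable source-supplied timestamp (`effective_at`); `FieldValue` and `EncounterRecord` are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Any


@dataclass
class FieldValue:
    value: Any
    effective_at: datetime  # when the source says the fact was recorded
    received_at: datetime   # when the connector received it


@dataclass
class EncounterRecord:
    encounter_id: str
    fields: dict[str, FieldValue] = field(default_factory=dict)
    history: list[tuple[str, FieldValue]] = field(default_factory=list)

    def apply(self, name: str, incoming: FieldValue) -> bool:
        """Apply an update and return True if it became the current value.

        Every update is kept in history. The current value is the one with the
        latest effective time, so a delayed older message cannot overwrite a
        newer correction, while a genuine later correction always wins.
        """
        self.history.append((name, incoming))
        current = self.fields.get(name)
        if current is None or incoming.effective_at >= current.effective_at:
            self.fields[name] = incoming
            return True
        return False
```

Keeping `history` alongside the current value means a retroactive correction changes the answer without erasing the evidence of what the record looked like before.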

Why Naive Connectors Fail in Production

Most first-generation healthcare connectors, including many built in-house by engineering teams at health tech companies, share a predictable set of failure traits that trace directly back to the three realities above.

- Hardcoded field mappings that don't survive EHR version upgrades or customer-specific configurations.

- Inline transformation logic tightly coupled to downstream schema assumptions, so a change in one consuming system requires touching the connector.

- No model for "unknown" or "pending" data: every field is either populated or treated as missing, with no distinction between intentionally empty and not yet arrived.

- No idempotency: Resends, replays, and retroactive corrections produce duplicates or corrupted state.

- No observability: when a field arrives wrong, there's no way to trace why a particular value was produced or skipped.

These connectors work until a source upgrades, a new customer enables a feature flag, or a field starts arriving inconsistently. Then everything breaks, silently, with no paper trail to diagnose it.

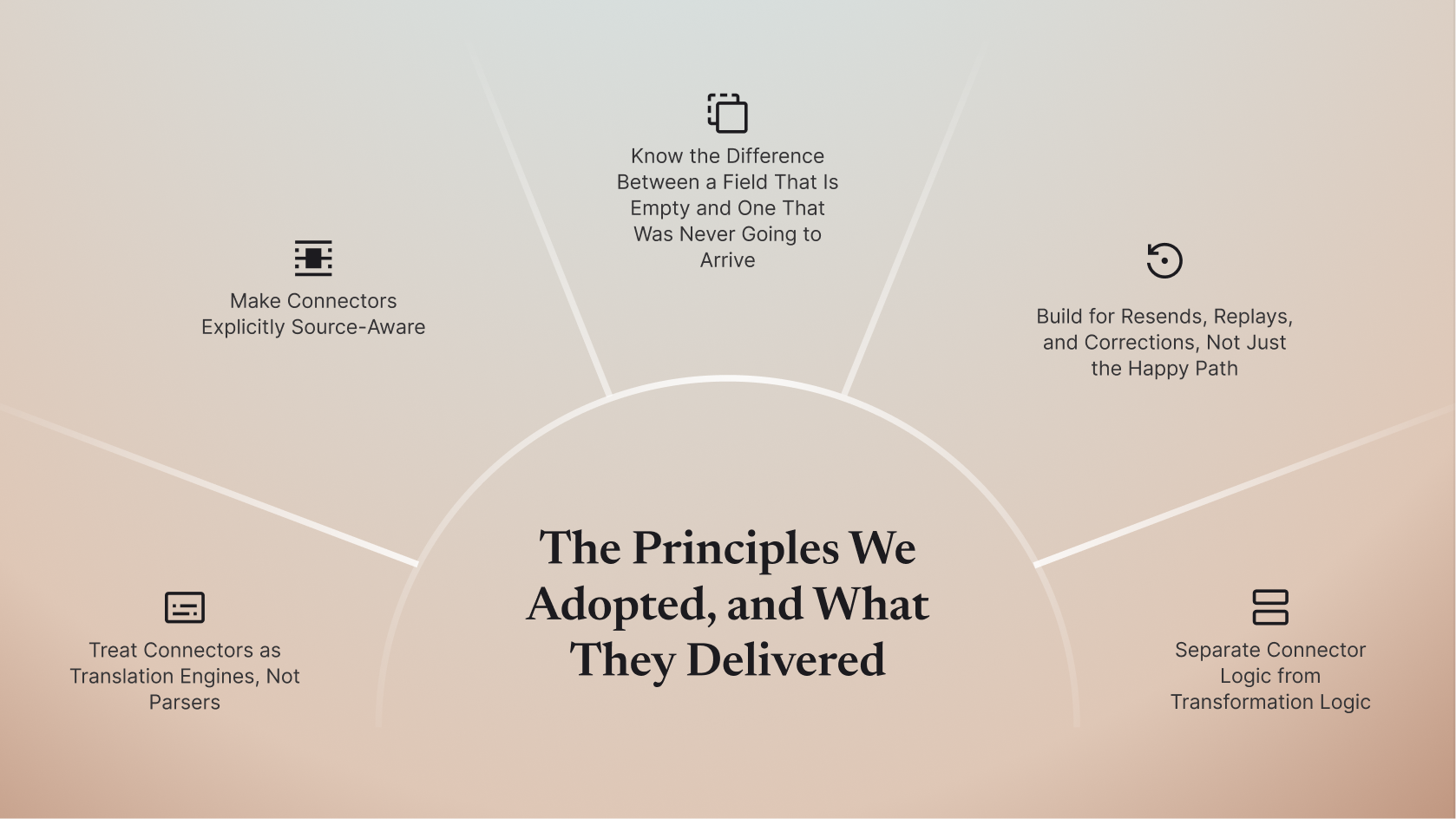

The Principles We Adopted, and What They Delivered

Principle #1: Treat Connectors as Translation Engines, Not Parsers

A parser converts a message from one format to another, HL7 to JSON for example. A translation engine does something more: it interprets source behavior, normalizes inconsistencies, preserves uncertainty, and emits data with explicit intent. That distinction sounds subtle. In production, it is the difference between a connector that works and one that survives.

For our health AI automation customer processing six million API calls per day from multiple Epic HL7 feeds, this principle meant two things in practice. First, parsing was separated from interpretation: the connector captured what the message said, then determined what it actually meant for this specific source based on known source behavior. Second, out-of-order and incomplete records were treated as expected, not exceptional. The connector tracked the history and timing of every update so that retroactive corrections landed in the right place in the record, not as duplicates or overrides that corrupted the timeline.
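The parse/interpret split can be shown on PV1.2. In this sketch, stage 1 only splits an HL7 v2 message into segments and fields; stage 2 applies a source-specific rule. The function names and the `pv1_2_behavior` values are hypothetical, and the parser ignores escape sequences and the MSH field-numbering offset.

```python
from typing import Optional

Segments = dict[str, list[list[str]]]


def parse_segments(message: str) -> Segments:
    """Stage 1 (parse): capture what the message says, with no interpretation."""
    segments: Segments = {}
    for line in filter(None, message.replace("\n", "\r").split("\r")):
        fields = line.split("|")
        segments.setdefault(fields[0], []).append(fields)
    return segments


def interpret_patient_class(
    segments: Segments, last_known: Optional[str], pv1_2_behavior: str
) -> Optional[str]:
    """Stage 2 (interpret): decide what PV1.2 means for this specific source."""
    pv1 = segments.get("PV1", [[]])[0]
    # After splitting on "|", index 2 of a PV1 segment is PV1.2 (patient class).
    raw = pv1[2] if len(pv1) > 2 and pv1[2] else None
    if raw is None and pv1_2_behavior == "first_event_only":
        # This source omits PV1.2 after the first event: absence means "unchanged".
        return last_known
    return raw
```

The same parsed message yields different answers under different source profiles, which is the point: parsing is shared, interpretation is per source.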

The Impact: Two large health systems and approximately one million patients were brought live within a hard deadline that a bespoke integration would have missed by months. Coding automation became operational ahead of schedule, unlocking revenue five times faster than the alternative integration timeline, with sub-second query performance at millions of records per day.

Principle #2: Make Connectors Explicitly Source-Aware

A connector must know which system it is talking to, which version it is running, which configuration is active, and which tenant-specific behaviors are enabled. This is not metadata to figure out later. It is part of the connector's identity.

For our value-based care platform customer connecting to four EHRs simultaneously, Elation, Athena, eClinicalWorks, and Epic, source-awareness was what made the difference between a working integration and a maintainable one:

- Each EHR connector was versioned independently. When Athena updated their FHIR surface, only the Athena connector configuration changed. The downstream data layer, the customer's application logic, and the other three EHR connectors were untouched.

- Behavior changes were explicit, not discovered in production. When one EHR started sending events out of sequence due to a software update, the change was captured in the connector's source profile rather than surfacing as a data anomaly weeks later.

- Mappings were contextual, not global. The same FHIR field meant different things in different EHR contexts, and the connector expressed that explicitly rather than flattening it into a single interpretation.

The Impact: What had previously required many months per EHR integration was compressed to weeks, with a significant reduction in engineering effort and integration costs. The team redirected that capacity to the product features that actually differentiated them in the market.

Principle #3: Know the Difference Between a Field That Is Empty and One That Was Never Going to Arrive

One of the most operationally damaging problems in production healthcare data pipelines is the inability to answer a simple question, “Why is this field empty?”

For our clinical AI customer processing 160,000-plus clinical documents per month, including lab reports, clinical notes, and care summaries, this principle was essential to building a system that auditors and clinicians could trust. The connector distinguishes between three distinct scenarios:

- Field not provided by this source: the EHR simply does not populate it, and the connector records this explicitly so downstream models know not to interpret the absence as a clinical negative.

- Field provided but not for this event type: the field exists in other contexts but is not expected here, so the connector signals this so AI logic does not wait for data that will never arrive.

- Field intentionally not hydrated: the source populates it in some configurations but not this one, and the connector captures that context so the absence is explainable and auditable.
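A minimal sketch of recording why a field is empty. The first three reasons mirror the scenarios above; `PENDING` is an added fourth case for the "not yet arrived" state, and `SourceProfile` with its example field names is hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class AbsenceReason(Enum):
    NOT_PROVIDED_BY_SOURCE = "source never populates this field"
    NOT_EXPECTED_FOR_EVENT = "field is not sent for this event type"
    NOT_HYDRATED_IN_CONFIG = "source can send it, but not in this configuration"
    PENDING = "expected for this event, not yet received"


@dataclass(frozen=True)
class SourceProfile:
    never_populated: frozenset[str]
    fields_by_event: dict[str, frozenset[str]]  # event type -> fields it normally carries
    disabled_in_config: frozenset[str]


def explain_absence(field_name: str, event_type: str, profile: SourceProfile) -> AbsenceReason:
    """Attach an explicit, auditable reason to an empty field."""
    if field_name in profile.never_populated:
        return AbsenceReason.NOT_PROVIDED_BY_SOURCE
    if field_name not in profile.fields_by_event.get(event_type, frozenset()):
        return AbsenceReason.NOT_EXPECTED_FOR_EVENT
    if field_name in profile.disabled_in_config:
        return AbsenceReason.NOT_HYDRATED_IN_CONFIG
    return AbsenceReason.PENDING
```

Only `PENDING` should make downstream logic wait; the other three tell it the value is not coming.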

The Impact: Every piece of processed data carried a complete audit trail with full replay capability. If clinical reviewers asked why an automated decision was made, or not made, the answer was traceable back to the exact data state at the time of processing. Business outcomes became explainable and defensible.

Principle #4: Build for Resends, Replays, and Corrections, Not Just the Happy Path

Healthcare feeds will resend messages. They will reorder events. They will replay historical data during maintenance windows, EHR upgrades, and integration testing. A connector must therefore be idempotent: processing the same message twice must produce the same result as processing it once, not a duplicate. If your connector is not built this way, you will corrupt data, create duplicate records, and lose trust, in that order.
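A minimal idempotency sketch, assuming the source's message control ID (MSH-10 in HL7 v2) stays stable across resends and replays. A content hash guards against a resend that reuses an ID with different content. `IdempotentIngestor` is a hypothetical name, and the in-memory dict stands in for what would need to be a durable store.

```python
import hashlib


class IdempotentIngestor:
    """Apply each message at most once, keyed by (source, message control ID)."""

    def __init__(self) -> None:
        self._seen: dict[tuple[str, str], str] = {}  # key -> SHA-256 of payload
        self.applied: list[str] = []                 # stand-in for the real side effect

    def ingest(self, source: str, control_id: str, payload: str) -> str:
        key = (source, control_id)
        digest = hashlib.sha256(payload.encode("utf-8")).hexdigest()
        previous = self._seen.get(key)
        if previous == digest:
            return "duplicate-skipped"  # exact resend or replay: no effect
        if previous is not None:
            return "conflict-flagged"   # same ID, different content: route for review
        self._seen[key] = digest
        self.applied.append(control_id)
        return "applied"
```

Scoping the key by source matters: two feeds can legitimately reuse the same control ID.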

For our health AI automation customer receiving 300,000 encounters per day from multiple concurrent HL7 feeds, this principle prevented a significant data quality failure during a planned Epic upgrade window:

- The source system replayed approximately 48 hours of historical admission messages during the upgrade to ensure downstream systems had current state.

- Because every connector operation was built to handle re-delivery cleanly, the replay was absorbed without incident.

- The downstream coding system saw no duplicates, no corrupted encounter timelines, and no billing anomalies.

The Impact: Zero duplicate records during a 48-hour historical replay event. No manual remediation required. Billing integrity maintained through a full EHR upgrade cycle. Without idempotency, tens of thousands of encounter records would have required manual remediation.

Principle #5: Separate Connector Logic from Transformation Logic

A common architectural mistake is embedding mapping logic, business rules, and target-schema assumptions directly inside the connector. This creates fragile upgrades, hard-to-test behavior, and tight coupling that makes every change expensive.

The xCaliber approach enforces strict separation: the connector's responsibility is to understand the source. The transformation layer's responsibility is to shape data for targets.

In practice, this separation is what allows the platform to support 90+ EHR variants across a single normalized FHIR++ output surface without maintaining 90 separate downstream transformation pipelines. When customers add a new data consumer, whether a new analytics product, a new AI model, or a new payer integration, the connector layer doesn't change.
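The boundary can be sketched as two layers with a canonical record between them. Everything here is illustrative: `CanonicalPatient`, `epic_pid_connector`, and the `TRANSFORMS` registry are hypothetical, and the FHIR-shaped output is a plain dict, not xCaliber's FHIR++ format.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CanonicalPatient:
    """Hypothetical normalized record emitted by the connector layer."""
    patient_id: str
    family_name: str
    birth_date: str  # ISO 8601 date


def epic_pid_connector(pid_fields: list[str]) -> CanonicalPatient:
    """Connector layer: understands the source, knows nothing about consumers."""
    # After splitting on "|": index 3 = PID.3 (identifier), 5 = PID.5 (name), 7 = PID.7 (DOB).
    patient_id = pid_fields[3].split("^")[0]
    family = pid_fields[5].split("^")[0]
    dob = pid_fields[7]  # YYYYMMDD
    return CanonicalPatient(patient_id, family, f"{dob[:4]}-{dob[4:6]}-{dob[6:8]}")


# Transformation layer: one entry per consumer. Adding a consumer touches only this dict.
TRANSFORMS: dict[str, Callable[[CanonicalPatient], dict]] = {
    "analytics": lambda p: {"id": p.patient_id, "birth_year": int(p.birth_date[:4])},
    "fhir": lambda p: {
        "resourceType": "Patient",
        "id": p.patient_id,
        "name": [{"family": p.family_name}],
        "birthDate": p.birth_date,
    },
}


def publish(record: CanonicalPatient, consumers: list[str]) -> dict[str, dict]:
    return {name: TRANSFORMS[name](record) for name in consumers}
```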

The Impact: 90+ EHR variants. One normalized FHIR++ output surface. Zero per-consumer connector changes when downstream requirements evolve. The connector layer is stable; the transformation layer adapts.

The xCaliber Differentiators Worth Knowing

Bidirectional Write-Back

Legacy integration engines are fundamentally read-only. xCaliber pipelines also process data in reverse: accepting inbound FHIR bundles, mapping them to the source system's native format, and writing workflows, clinical notes, orders, and care plan updates directly back to the EHR. The care loop closes. AI outputs become EHR inputs.

Cross-Connector Data Sync (CCDS)

A single EHR source often requires multiple connectors simultaneously: an HL7 feed for ADT events, a FHIR API for clinical records, and an Operator extraction for UI-only data. CCDS automatically synchronizes and deduplicates data across all connectors for the same source before anything moves downstream. Applications receive a coherent, deduplicated record, not a collision of partially overlapping feeds.
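To make the idea concrete, here is a minimal cross-connector merge under one assumed policy: records sharing a natural key are merged in update-time order, so the most recently updated connector wins a conflicting field, and per-field provenance is kept. This is an illustration of the concept, not CCDS's actual algorithm; `SourceRecord` and `sync_across_connectors` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class SourceRecord:
    connector: str       # e.g. "hl7-adt", "fhir-api", "operator"
    resource_key: str    # natural key shared across connectors, e.g. encounter ID
    updated_at: datetime
    data: dict


def sync_across_connectors(records: list[SourceRecord]) -> dict[str, dict]:
    """Collapse overlapping records from several connectors into one record per key."""
    merged: dict[str, dict] = {}
    for rec in sorted(records, key=lambda r: r.updated_at):
        entry = merged.setdefault(rec.resource_key, {"data": {}, "provenance": {}})
        for name, value in rec.data.items():
            entry["data"][name] = value          # later updates overwrite earlier ones
            entry["provenance"][name] = rec.connector
    return merged
```

Recording which connector supplied each field keeps the merged record explainable when two feeds disagree.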

The EHR Operator

About 60% of EHR data is API-inaccessible, and much of it lives in browser UIs. Rather than accepting that limitation, we built an agentic UI connector, the EHR Operator, that uses deterministic extraction logic (not brittle RPA screen-scraping) to navigate browser interfaces, extract data, and output it as structured FHIR++. Every session is fully observable and replayable via XC Studio.

Four Sync Modes

Connectors support four operational sync modes, selectable per connector:

- Bulk: historical extraction for initial data loads or backfills

- Incremental: delta-based sync for ongoing data refreshes

- Real-time CDC: sub-minute change data capture for event-driven workflows

- Aggregated / CCDS: multi-connector synchronized output for unified data views
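A per-connector mode setting might be modelled as below. This is a hypothetical sketch: `SyncMode`, `ConnectorConfig`, and `select_changes` are not XC Studio's API, and the watermark logic shows only the difference between bulk and incremental pulls. Real-time CDC and aggregated output are treated as push-based or multi-connector modes outside this function.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class SyncMode(Enum):
    BULK = "bulk"
    INCREMENTAL = "incremental"
    REALTIME_CDC = "realtime-cdc"
    AGGREGATED = "aggregated-ccds"


@dataclass
class ConnectorConfig:
    name: str
    mode: SyncMode
    watermark: Optional[datetime] = None  # newest change already synced


def select_changes(config: ConnectorConfig, changes: list[tuple[datetime, str]]) -> list[str]:
    """Pick which (timestamp, item) changes a pull should process under the configured mode."""
    if config.mode is SyncMode.BULK:
        selected = list(changes)  # full historical extraction or backfill
    elif config.mode is SyncMode.INCREMENTAL:
        selected = [c for c in changes if config.watermark is None or c[0] > config.watermark]
    else:
        raise ValueError(f"{config.mode.value} is not a pull-based mode")
    if selected:
        config.watermark = max(ts for ts, _ in selected)
    return [item for _, item in sorted(selected)]
```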

No-Code Configuration via XC Studio

Connectors are managed through XC Studio, the xCaliber control plane. Teams can enable or disable connectors, set rate limits, apply policy changes, and inspect execution timelines at runtime, without code deployments. Observability is surfaced at every layer: per-feed throughput, latency, SLA tracking, full input/output inspection at each processing step, dead-letter queue visibility for failed messages, and session replay for Operator extractions.

When an auditor asks why a particular clinical decision was made, or not made, the answer is traceable.

Observability: If You Cannot Explain It, You Cannot Trust It

A production-grade healthcare connector must be observable at the message level, the field level, and the decision level. You need to be able to answer: why was this field populated? Why was it skipped? Why did this update override a previous value?
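Answering those three questions requires that the connector record a decision, with a reason, every time it populates, skips, or overrides a field. A minimal sketch follows; `Decision` and `DecisionTrace` are hypothetical names, and a production trace would be persisted with the data rather than held in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Decision:
    message_id: str
    field_name: str
    action: str  # "populated" | "skipped" | "overrode"
    reason: str
    at: datetime


@dataclass
class DecisionTrace:
    decisions: list[Decision] = field(default_factory=list)

    def record(self, message_id: str, field_name: str, action: str, reason: str) -> None:
        self.decisions.append(
            Decision(message_id, field_name, action, reason, datetime.now(timezone.utc))
        )

    def why(self, field_name: str) -> list[str]:
        """Answer 'why does this field look the way it does?' in processing order."""
        return [
            f"{d.message_id}: {d.action} ({d.reason})"
            for d in self.decisions
            if d.field_name == field_name
        ]
```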

In healthcare, this is not just an engineering concern. It is a compliance requirement. Auditors do not accept "the system did it" as an explanation. Clinical AI companies need to demonstrate that their models acted on accurate, complete data. Revenue cycle platforms need to show that their coding recommendations were based on a clean record.

This is what XC Studio, xCaliber's operational control plane, is built to surface. At every layer of the pipeline, you can see per-feed throughput, latency, and SLA performance; inspect the full input and output at each processing step; review messages that failed to process before they disappear from view; and replay past sessions to understand exactly what happened and when. Every decision the connector made is traceable, and that trace travels with the data through the full lifecycle. In healthcare, that level of visibility is not a nice-to-have. It is what makes automation defensible.

Final Takeaway

Healthcare standards reduce chaos. They do not eliminate it. Every real-world deployment introduces variations that no specification anticipated: sources that implement standards differently, fields that carry meaning they were never designed to carry, and data that arrives late, out of order, or not at all.

The connectors that survive production are not the ones that assume clean input. They are the ones built to expect variation, track uncertainty, handle corrections, and explain every decision they make.

The xCaliber approach, treating every source as a unique behavioral contract, modeling data states explicitly, building for re-delivery and replay from the start, separating connector logic from transformation logic, and surfacing full observability at every layer, is what allows a single platform to absorb the full diversity of production healthcare data without breaking.

A connector that only understands the standard will fail. A connector that understands the source will survive. At xCaliber, we build for the source, and the results are in production.